Strategic Object Storage Analysis: Technical Evolution and Market Dynamics of AWS S3, Azure Blob, and Google Cloud Storage for 2026 Enterprise Architectures

The global landscape of object storage has transitioned from a passive archival utility to a high-performance foundation for the generative artificial intelligence (AI) lifecycle. As organizations enter 2026, the architectural choice between Amazon Simple Storage Service (S3), Azure Blob Storage, and Google Cloud Storage (GCS) is no longer dictated solely by cost per gigabyte, but by the ability of the storage layer to integrate with large-scale model training, support "agentic scale" workloads, and satisfy increasingly stringent digital sovereignty requirements. The functional gap between these hyperscalers has narrowed, shifting the competitive focus toward the "operational tax" each platform levies on engineering teams and the depth of their respective ecosystems.

The Transformation of Object Storage into the AI Filesystem

Object storage platforms are fundamentally designed to handle massive volumes of unstructured data through a flat namespace, utilizing unique identifiers for data retrieval rather than the hierarchical structure of traditional file systems. However, the emergence of AI and high-performance computing (HPC) has forced an evolution in these architectures. AWS S3 maintains its market dominance through an expansive catalog of eight storage classes, emphasizing maximum flexibility and control for complex optimization scenarios. Azure Blob Storage emphasizes deep integration with the Microsoft ecosystem and a storage account structure that provides a balance between global accessibility and administrative boundaries. Google Cloud Storage prioritizes intuitive simplicity, offering four storage classes that map directly to access frequency, backed by a global network backbone that minimizes latency across regions.
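The flat-namespace model can be illustrated with a small sketch. It assumes an in-memory collection of keys standing in for a bucket and a hypothetical helper, `list_with_delimiter`, that mimics the prefix-and-delimiter listing object stores commonly expose; it is not any provider's actual API.

```python
from typing import Iterable, List, Tuple


def list_with_delimiter(
    keys: Iterable[str], prefix: str = "", delimiter: str = "/"
) -> Tuple[List[str], List[str]]:
    """Emulate a 'folder' listing over a flat key space.

    Returns (objects directly under the prefix, simulated sub-folder prefixes).
    """
    objects: List[str] = []
    common_prefixes = set()
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        head, sep, _ = rest.partition(delimiter)
        if sep:
            # The key continues past a delimiter, so it rolls up into a "folder".
            common_prefixes.add(prefix + head + delimiter)
        else:
            objects.append(key)
    return objects, sorted(common_prefixes)


# Hypothetical keys: there are no real directories, only strings containing "/".
example_keys = [
    "datasets/train/shard-0001.parquet",
    "datasets/train/shard-0002.parquet",
    "datasets/eval/shard-0001.parquet",
    "README.txt",
]

if __name__ == "__main__":
    print(list_with_delimiter(example_keys))
    print(list_with_delimiter(example_keys, prefix="datasets/"))
```

The "folders" exist only as a by-product of how keys are grouped at listing time, which is why directory-style operations on a flat bucket touch every matching object.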

The convergence of object storage and high-performance file systems is a defining trend of 2025 and 2026. This shift is necessitated by AI/ML workloads that generate millions of I/O requests, often synchronizing across large clusters of GPUs or TPUs that cannot afford to remain idle while waiting for data. The result is a new class of "high-performance object storage" that delivers single-digit millisecond latency, once the exclusive domain of block or local NVMe storage.

Core Architectural Comparison

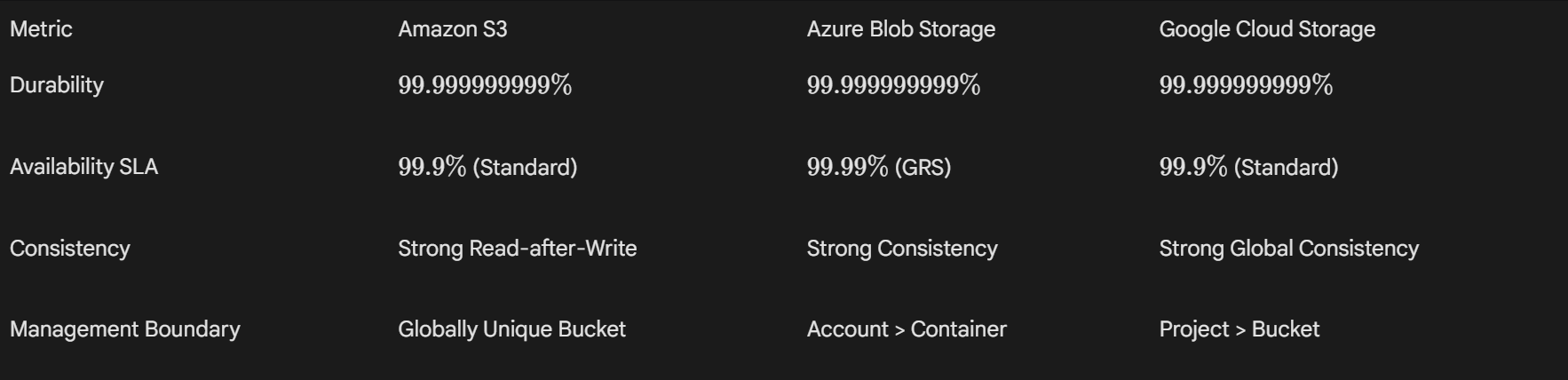

All three major providers design for exceptional durability, commonly quoted as eleven nines (99.999999999%) of annual durability per object, which makes the loss of any given object statistically improbable even over a century of operation. The differentiation arises in availability Service Level Agreements (SLAs) and the mechanism of data distribution.
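To make the eleven-nines figure concrete, the following is a back-of-the-envelope sketch. It assumes the quoted durability can be read as an independent annual survival probability per object, which is a simplification, and the function names are illustrative.

```python
from fractions import Fraction

# Eleven nines, expressed exactly to avoid floating-point rounding.
ANNUAL_DURABILITY = Fraction(99_999_999_999, 100_000_000_000)


def expected_annual_losses(object_count: int) -> Fraction:
    """Expected number of objects lost per year under the simplified model."""
    return object_count * (1 - ANNUAL_DURABILITY)


def years_per_expected_loss(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    return 1 / expected_annual_losses(object_count)


if __name__ == "__main__":
    stored = 10_000_000  # hypothetical fleet of ten million objects
    print(float(expected_annual_losses(stored)))  # expected losses per year
    print(int(years_per_expected_loss(stored)))   # years per expected loss
```

Under these assumptions, ten million stored objects correspond to roughly one expected loss every 10,000 years.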

AWS S3 utilizes a global namespace for bucket names, requiring careful planning to avoid naming conflicts, whereas Azure uses a storage account-plus-container structure that allows for more flexible internal naming within the account boundary. GCP offers strong global consistency, making it particularly attractive for high-throughput analytics where data must be immediately available across all regions after a write operation.
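The naming difference shows up in the public endpoint formats. The sketch below builds the commonly documented URL shapes for each service; the helper names and the example bucket, account, and object names are hypothetical.

```python
def s3_url(bucket: str, region: str, key: str) -> str:
    """Virtual-hosted-style S3 URL: the bucket name is part of the hostname."""
    return f"https://{bucket}.s3.{region}.amazonaws.com/{key}"


def azure_blob_url(account: str, container: str, blob: str) -> str:
    """Azure Blob URL: the storage account is the hostname, the container is a path segment."""
    return f"https://{account}.blob.core.windows.net/{container}/{blob}"


def gcs_url(bucket: str, obj: str) -> str:
    """Path-style Google Cloud Storage URL."""
    return f"https://storage.googleapis.com/{bucket}/{obj}"


if __name__ == "__main__":
    print(s3_url("acme-training-data", "eu-central-1", "shards/0001.parquet"))
    print(azure_blob_url("acmestorage", "training-data", "shards/0001.parquet"))
    print(gcs_url("acme-training-data", "shards/0001.parquet"))
```

Because the S3 bucket name appears in a shared DNS namespace, it has to be unique across customers, whereas on Azure only the storage account name carries that constraint and container names are scoped to the account.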

High-Performance Object Storage for AI and ML Workloads

The infrastructure supporting AI workloads has seen massive upgrades, as the performance requirements for training large language models (LLMs) and performing real-time inference have outpaced standard object storage capabilities. Enterprise data must now be converted to AI-ready formats with minimal latency to prevent GPU starvation.

AWS S3 Express One Zone and S3 Tables

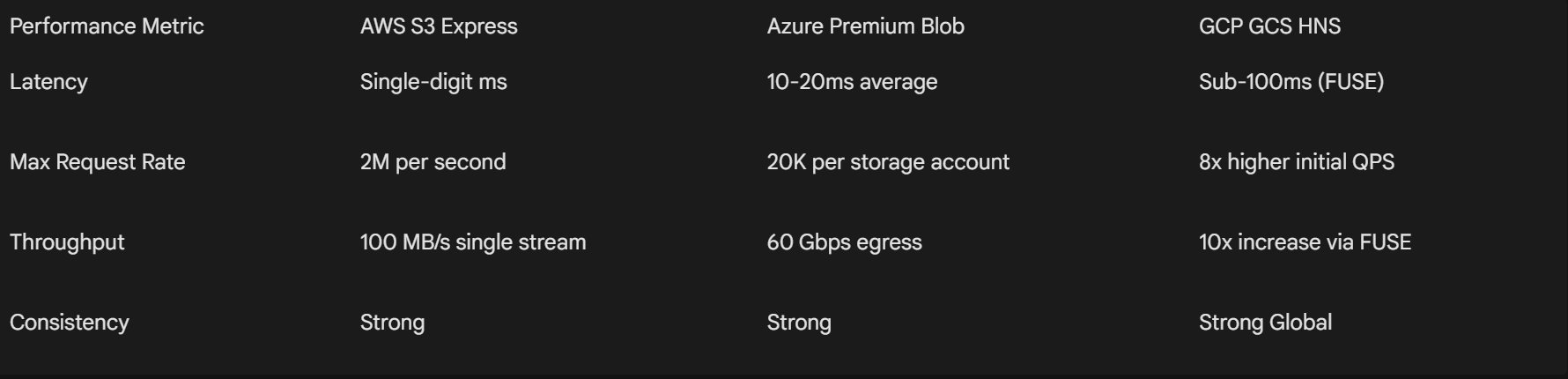

AWS S3 Express One Zone is a purpose-built storage class designed for the most frequently accessed data, offering up to 10x faster access speeds and 80% lower request costs compared to S3 Standard. By co-locating storage within a specific Availability Zone, AWS enables single-digit millisecond request latency, which is essential for iterative ML model training and high-performance computing (HPC).

In late 2025, AWS expanded its high-performance portfolio with S3 Tables, which provides analytics-optimized storage. These tables support automatic compaction, merging smaller objects into larger ones to improve query performance—a critical feature for data lakes where millions of small files can otherwise degrade performance. S3 Tables now includes replication support across Regions and accounts, alongside Intelligent-Tiering to optimize costs for analytics datasets.
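The idea behind compaction can be sketched with a simple greedy planner. This is an illustration of the general technique only, not the algorithm S3 Tables uses; the function name, the 128 MiB target, and the file sizes are assumptions.

```python
from typing import List


def plan_compaction(file_sizes_mib: List[int], target_mib: int) -> List[List[int]]:
    """Group consecutive small files so each group stays at or under the target size."""
    groups: List[List[int]] = []
    current: List[int] = []
    current_size = 0
    for size in file_sizes_mib:
        # Close the current group when adding this file would exceed the target.
        if current and current_size + size > target_mib:
            groups.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        groups.append(current)
    return groups


if __name__ == "__main__":
    small_files = [8, 16, 4, 100, 30, 60, 64, 2]  # hypothetical sizes in MiB
    plan = plan_compaction(small_files, target_mib=128)
    print(f"{len(small_files)} files -> {len(plan)} compacted files: {plan}")
```

Fewer, larger files mean fewer requests and less per-file overhead for each query, which is where the performance gain comes from.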

The shift toward S3 directory buckets is a notable architectural change. These buckets only allow objects stored in the S3 Express One Zone storage class and support up to 2 million requests per second (RPS). This represents a paradigm shift from traditional S3 buckets, which are optimized for regional distribution, to a model where storage is tightly coupled with compute resources for maximum throughput.

Azure Scaled Accounts and Premium Blob Storage

Azure has evolved its storage architecture to support the massive concurrency required by entities like OpenAI for frontier model training. Azure Blob "scaled accounts" allow storage to scale across hundreds of scale units within a single region, capable of handling tens of terabits per second (Tbps) in throughput and millions of IOPS. Benchmarks demonstrated a single storage account scaling to over 50 Tbps on read throughput, a necessary threshold for feeding massive GPU clusters.
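A quick unit conversion helps put the 50 Tbps figure in perspective. The sketch below uses decimal units (1 Tb = 10^12 bits) and a hypothetical 1 PB dataset; it ignores protocol overhead and assumes the full throughput is sustained.

```python
def tbps_to_bytes_per_second(tbps: float) -> float:
    """Convert terabits per second to bytes per second (decimal units)."""
    return tbps * 10**12 / 8


def seconds_to_read(dataset_bytes: float, tbps: float) -> float:
    """Idealized time to stream a dataset once at the given throughput."""
    return dataset_bytes / tbps_to_bytes_per_second(tbps)


if __name__ == "__main__":
    one_petabyte = 10**15  # hypothetical training corpus size
    print(tbps_to_bytes_per_second(50) / 10**12, "TB/s")
    print(seconds_to_read(one_petabyte, 50), "seconds per full pass")
```

At 50 Tbps, which is 6.25 TB/s, a full pass over a petabyte-scale corpus would take on the order of minutes rather than hours under these idealized assumptions.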

For latency-sensitive RAG (Retrieval-Augmented Generation) agents, Azure's Premium Block Blob storage utilizes SSD-based media to deliver consistent low-latency retrieval, often up to 3x faster than standard tiers. Azure also introduced "Storage for AI," a portal experience that simplifies the connection between storage accounts and AI Foundry, enabling the generation of knowledge bases directly from stored documents.

Azure's 2026 vision includes the integration of Azure Boost Data Processing Units (DPUs), which are expected to provide step-function gains in storage speed while reducing per-unit energy consumption. Furthermore, Azure Container Storage (ACStor) is adopting a Kubernetes operator model for complex orchestration and has moved to an open-source codebase to foster community innovation.

Google Cloud Storage HNS Performance Benchmarks

Google's implementation of Hierarchical Namespaces (HNS) focuses on removing the I/O bottlenecks that can starve expensive TPU and GPU resources. Benchmarks indicate that HNS-enabled buckets offer up to 8x higher initial Queries Per Second (QPS) for read and write operations compared to flat buckets. When combined with Cloud Storage FUSE, which allows object buckets to be mounted as local file systems, organizations like AssemblyAI reported a 10x increase in throughput and a 15x improvement in overall training speed.

The move to HNS allows for atomic folder-level operations, such as renames and deletes, which are traditionally resource-intensive in object storage. For AI/ML data pipelines, this results in up to 20x faster checkpointing, as the storage system no longer needs to copy and delete individual objects to simulate a directory move.
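The cost difference between the two rename strategies can be shown with a toy model. It assumes dictionaries standing in for a flat bucket and for a folder tree; the function names are hypothetical and the code does not reflect how Cloud Storage is implemented internally.

```python
from typing import Any, Dict


def rename_prefix_flat(store: Dict[str, Any], old: str, new: str) -> int:
    """Simulate a directory move in a flat namespace: copy and delete every object."""
    operations = 0
    for key in [k for k in store if k.startswith(old)]:
        store[new + key[len(old):]] = store[key]  # copy to the new key
        del store[key]                            # delete the original
        operations += 2
    return operations


def rename_folder_hierarchical(parent: Dict[str, Any], old: str, new: str) -> int:
    """Simulate a folder rename in a hierarchical namespace: one metadata update."""
    parent[new] = parent.pop(old)
    return 1


if __name__ == "__main__":
    flat = {f"ckpt/tmp/part-{i}": b"" for i in range(1000)}
    print(rename_prefix_flat(flat, "ckpt/tmp/", "ckpt/final/"))   # grows with object count

    tree = {"tmp": {f"part-{i}": b"" for i in range(1000)}}
    print(rename_folder_hierarchical(tree, "tmp", "final"))        # constant
```

In the flat model the work grows linearly with the number of objects in the checkpoint, while the hierarchical rename stays constant, which is the intuition behind the faster checkpointing described above.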

Digital Sovereignty and the Rise of Sovereign Clouds

Digital sovereignty has emerged as a critical differentiator for enterprises operating under strict regulatory regimes, particularly in the European Union. Sovereignty extends beyond simple data residency to encompass operational autonomy, where the infrastructure and the data are shielded from non-local jurisdictional control.

The AWS European Sovereign Cloud and Dedicated Local Zones

In early 2026, AWS launched its European Sovereign Cloud, starting with a region in Brandenburg, Germany. This independent cloud is physically and logically separated from standard AWS Regions, ensuring that all customer data and metadata—including permissions, roles, and configurations—remain within the EU. Operations are handled exclusively by EU residents located in the EU, and the infrastructure has no critical dependencies on non-EU systems.

For organizations requiring even tighter control, AWS offers Dedicated Local Zones. These are fully managed by AWS but built for the exclusive use of a single customer or community and placed in a location specified by that customer, such as a private data center. By 2026, these Dedicated Local Zones support a broad range of services, including S3 Express One Zone and Amazon EBS, allowing for high-performance processing within a specific sovereign perimeter.

AWS AI Factories also play a role in sovereignty, enabling customers to deploy fully managed AI infrastructure in their own data centers. This helps governments and highly regulated enterprises accelerate AI initiatives while meeting strict data residency and security requirements.

Azure and Oracle Database Integration

Azure's approach to sovereignty and enterprise governance is bolstered by its extensive partnership with Oracle. Oracle Database@Azure brings Oracle's industry-leading database services natively into Azure data centers, which is available in 33 regions globally by late 2025. This allows enterprises to unify their critical transactional data with Azure's AI platforms without the data leaving the Azure environment, maintaining compliance and reducing latency.

Azure also utilizes Entra ID for unified identity and access control, providing a zero-trust foundation for managing sensitive datasets across hybrid and multicloud environments. Microsoft's regional policy requiring dual availability zones within each region further ensures resilient architecture and compliance with enterprise continuity standards.

Comprehensive Cost Analysis and FinOps Strategies

As data volumes grow exponentially, managing cloud storage costs has become a primary challenge for IT leadership. Hyperscalers have responded with sophisticated automation tools and, more recently, adjustments to egress fee structures.

Storage Tiering and Automation

Each provider offers multiple tiers to balance access speed and cost, with automation increasingly used to move data between these tiers based on real-world access patterns.

AWS S3 Intelligent-Tiering is particularly effective for unpredictable workloads, as it automatically moves objects between frequent and infrequent access tiers without retrieval fees. However, it incurs a monthly monitoring and automation fee per object ($0.0025 per 1,000 objects), which adds up across billions of objects. Objects smaller than 128 KB are not monitored or auto-tiered, so they do not incur this fee but also gain nothing from the automation.
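The monitoring fee is easy to estimate. The sketch below uses the $0.0025 per 1,000 objects rate quoted above and assumes it is billed monthly on the number of monitored objects; the function name and object counts are illustrative.

```python
from decimal import Decimal

# Rate quoted in the text: USD per 1,000 monitored objects (assumed per month).
MONITORING_FEE_PER_1000 = Decimal("0.0025")


def monthly_monitoring_fee(monitored_objects: int) -> Decimal:
    """Estimated monthly Intelligent-Tiering monitoring fee in USD."""
    return MONITORING_FEE_PER_1000 * monitored_objects / 1000


if __name__ == "__main__":
    for count in (1_000_000, 1_000_000_000, 10_000_000_000):
        print(f"{count:>14,} objects -> ${monthly_monitoring_fee(count):,.2f} per month")
```

At one billion monitored objects the fee comes to about $2,500 per month, independent of how much data those objects hold, so it weighs most heavily on datasets made up of many small files.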

Azure provides Hot, Cool, Cold, and Archive tiers with automated lifecycle management. Azure's Hot tier is often cited as being up to 20% cheaper than S3 Standard for frequent access ($0.0184 vs $0.023 per GB), making it attractive for high-traffic web applications.
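The 20% figure follows directly from the two list prices quoted above. The short check below uses those prices as given; the 100 TB volume is a hypothetical example, and actual prices vary by region and redundancy option.

```python
from decimal import Decimal

AZURE_HOT_PER_GB = Decimal("0.0184")    # USD per GB-month, as quoted in the text
S3_STANDARD_PER_GB = Decimal("0.023")   # USD per GB-month, as quoted in the text


def relative_saving(cheaper: Decimal, baseline: Decimal) -> Decimal:
    """Fraction by which `cheaper` undercuts `baseline`."""
    return (baseline - cheaper) / baseline


def monthly_storage_cost(gigabytes: int, price_per_gb: Decimal) -> Decimal:
    """Flat-rate monthly storage cost, ignoring volume tiers and request charges."""
    return gigabytes * price_per_gb


if __name__ == "__main__":
    volume_gb = 100_000  # hypothetical 100 TB of hot data
    print(f"saving: {relative_saving(AZURE_HOT_PER_GB, S3_STANDARD_PER_GB):.0%}")
    print(f"S3 Standard: ${monthly_storage_cost(volume_gb, S3_STANDARD_PER_GB):,.2f}")
    print(f"Azure Hot:   ${monthly_storage_cost(volume_gb, AZURE_HOT_PER_GB):,.2f}")
```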

GCS uses a straightforward model (Standard, Nearline, Coldline, and Archive) with intuitive minimum storage durations (none, 30, 90, and 365 days respectively). Google's automatic sustained-use discounts apply to Compute Engine resources rather than to Cloud Storage, so they make the compute side of a steady workload more predictable without the manual configuration required for AWS or Azure savings plans, but they do not reduce the storage bill itself.
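Minimum storage durations matter because deleting an object early is billed as if it had been stored for the full minimum period. The sketch below encodes the durations listed above; the function name is illustrative and per-GB prices are deliberately left out.

```python
# Minimum storage durations in days, as listed in the text.
MIN_STORAGE_DAYS = {
    "STANDARD": 0,
    "NEARLINE": 30,
    "COLDLINE": 90,
    "ARCHIVE": 365,
}


def billable_days(storage_class: str, days_stored: int) -> int:
    """Days of storage billed for an object deleted after `days_stored` days."""
    return max(days_stored, MIN_STORAGE_DAYS[storage_class])


if __name__ == "__main__":
    for storage_class in MIN_STORAGE_DAYS:
        print(storage_class, billable_days(storage_class, days_stored=10))
```

An object deleted from Coldline after 10 days is therefore billed for 90 days, which is why colder classes suit data with a predictable, long retention window.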

The Egress Fee Paradigm Shift

A landmark development in 2024 was the announcement by Google, AWS, and Microsoft regarding the removal of data egress fees. However, these waivers are often restricted to customers who are migrating their data to another provider or back on-premises and who intend to close their accounts entirely.

Google's policy requires an application for credits and a 60-day window to complete the migration. AWS's approach does not strictly require account closure but mandates the removal of all data from the account and is subject to "additional scrutiny" for repeated requests. For daily multicloud operations, standard egress fees typically ranging from $0.08 to $0.12 per GB remain a significant component of the total cost of ownership (TCO).
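The operational impact of standard egress pricing can be estimated with a simple range calculation. The sketch below applies the $0.08 to $0.12 per GB range quoted above as flat rates; it ignores volume tiers and free allowances, and the 50 TB monthly transfer volume is a hypothetical example.

```python
from decimal import Decimal
from typing import Tuple

# Per-GB egress range quoted in the text (USD).
EGRESS_LOW_PER_GB = Decimal("0.08")
EGRESS_HIGH_PER_GB = Decimal("0.12")


def monthly_egress_range(gigabytes_out: int) -> Tuple[Decimal, Decimal]:
    """Low and high monthly egress estimates at flat per-GB rates."""
    return gigabytes_out * EGRESS_LOW_PER_GB, gigabytes_out * EGRESS_HIGH_PER_GB


if __name__ == "__main__":
    low, high = monthly_egress_range(50_000)  # hypothetical 50 TB per month
    print(f"${low:,.2f} to ${high:,.2f} per month")
```

For 50 TB of cross-cloud traffic per month this comes to roughly $4,000 to $6,000, a recurring cost that the migration-only waivers do not touch.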

Sustainability and ESG Reporting Capabilities

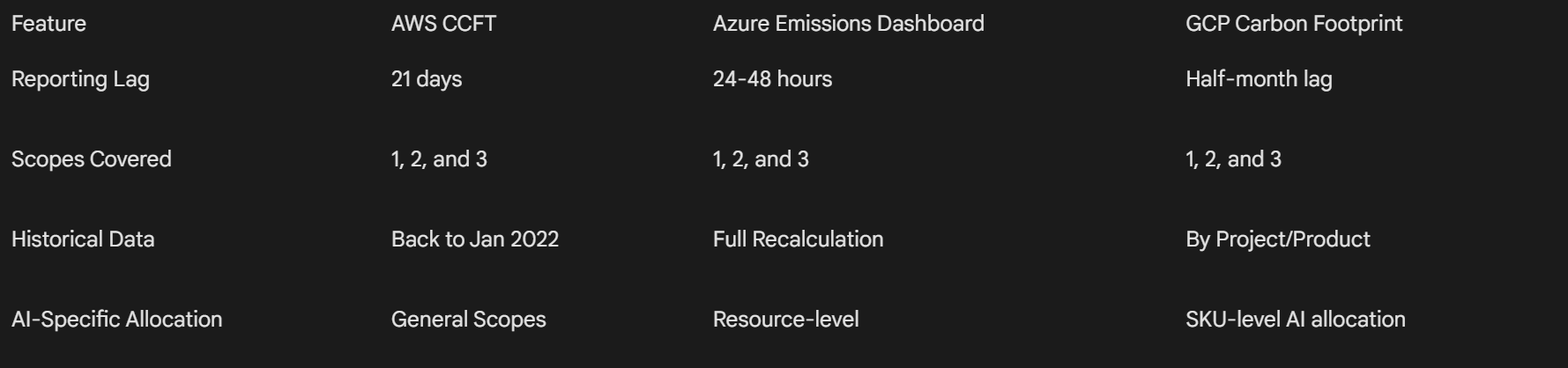

Environmental, Social, and Governance (ESG) reporting has transitioned from a voluntary disclosure to a regulatory necessity for many global enterprises. Cloud providers have invested heavily in tools that provide transparency into the carbon footprint of storage and compute resources.

AWS Customer Carbon Footprint Tool (CCFT)

By late 2025, AWS significantly improved the granularity and timeliness of its sustainability reporting. The CCFT now includes Scope 3 emissions—covering hardware manufacturing, building construction, and equipment transportation associated with cloud usage. AWS also reduced the data reporting lag from three months to 21 days or less.

AWS CCFT methodology has been third-party assured, and the dashboard maintains 38 months of historical data. For automated reporting, developers can use the AWS SDK to retrieve carbon data programmatically, integrating it into corporate sustainability dashboards.

Azure Emissions Impact Dashboard

Azure's Emissions Impact Dashboard, built on Power BI, provides transparency into greenhouse gas emissions associated with Azure and Microsoft 365 usage. The dashboard tracks actual and avoided emissions, utilizing a methodology validated by Stanford University.

Azure users can access emissions data for individual Azure resources through the Microsoft Cloud for Sustainability API. This granular data is accessible through Azure Carbon Optimization capabilities, helping teams understand the specific environmental impact of individual storage accounts or compute instances.

Google Cloud Carbon Footprint

Google Cloud offers dual reporting of both location-based and market-based emissions, providing transparency into the actual grid mix versus the impact of renewable energy attribute certificates. In January 2026, Google updated its methodology to specifically allocate AI inference model emissions to associated services at the SKU level.

This reporting solution uses data from Electricity Maps to measure grid emissions on an hourly basis, supporting Google's goal of 24/7 Carbon-Free Energy (CFE). The tool is integrated with the "unattended project recommender," which provides estimates of potential carbon reductions from deleting idle projects.
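The reason hourly data matters for a 24/7 goal can be shown with a simplified matching calculation. This is an illustrative sketch, not Google's published methodology; the function names and the four-hour sample data are assumptions.

```python
from typing import Sequence


def hourly_cfe_score(consumption_kwh: Sequence[float], carbon_free_kwh: Sequence[float]) -> float:
    """Share of consumption matched by carbon-free supply in the same hour."""
    matched = sum(min(used, clean) for used, clean in zip(consumption_kwh, carbon_free_kwh))
    return matched / sum(consumption_kwh)


def volumetric_match(consumption_kwh: Sequence[float], carbon_free_kwh: Sequence[float]) -> float:
    """Share of consumption matched when totals are compared over the whole period."""
    return min(1.0, sum(carbon_free_kwh) / sum(consumption_kwh))


if __name__ == "__main__":
    consumption = [10, 10, 10, 10]   # steady load over four hypothetical hours
    carbon_free = [20, 20, 0, 0]     # surplus clean supply early, none later
    print(volumetric_match(consumption, carbon_free))   # totals look fully matched
    print(hourly_cfe_score(consumption, carbon_free))   # hourly view shows the gap
```

Totals over the period suggest a complete match, while the hourly view shows that only half of the consumption coincided with carbon-free supply.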

Data Management and Ecosystem Integration

The maturity of the ecosystem surrounding object storage often dictates its utility for complex enterprise workloads. AWS S3 benefits from nearly two decades of refinement, resulting in the broadest third-party tool support and a marketplace of over 10,000 solutions.

Analytics and Data Lakes

AWS S3 serves as the foundation for services like Athena for serverless queries, EMR for big data processing, and Redshift Spectrum for data warehousing. The integration of S3 Storage Lens provides organizations with a centralized dashboard to analyze storage trends and cost-efficiency across billions of prefixes.

Azure Data Lake Storage (ADLS) Gen2 is natively integrated with Azure Synapse Analytics and Power BI. ADLS Gen2's hierarchical namespace is specifically tuned for Hadoop-style workloads, allowing it to act more like a traditional file system for Spark and Databricks applications. Recent updates pushed disk performance for SAP HANA workloads to 780k IOPS and 16 GB/s throughput.

Google Cloud Storage is increasingly competitive in the analytics space, particularly through its deep integration with BigQuery. GCS is optimized for high-throughput data ingestion, and the new HNS feature allows BigQuery to perform federated queries across storage buckets with significantly reduced latency.

Hybrid and Multicloud Strategies

Hybrid cloud remains a reality for most enterprises, and object storage providers have expanded their on-premises connectivity options.

AWS offers the Snow Family of devices and AWS Outposts for extending S3 capabilities to edge locations and private data centers. Azure leads in hybrid capabilities through Azure Stack and Azure File Sync, allowing for seamless data movement between on-premises servers and the cloud. GCP focuses on portability and open-source standards, utilizing Anthos to manage storage and compute across multiple environments.

Security and Governance Frameworks

As object storage becomes the primary repository for enterprise intellectual property—particularly in the form of AI training datasets—security has been elevated to a top-tier architectural concern.

Identity and Access Management

AWS IAM is considered a highly granular system, allowing for detailed bucket and object-level policies, alongside S3 Access Points that simplify management for shared datasets. AWS also integrates S3 with Macie for automated sensitive data discovery and CloudTrail for comprehensive audit logging.

Azure leverages Microsoft Entra ID for unified authentication, which is valued for its support of existing Active Directory groups and zero-trust security models. Microsoft Defender for Storage (formerly Azure Defender for Storage) provides advanced threat protection, identifying unusual access patterns or potential malware uploads.

Google Cloud Storage utilizes IAM for fine-grained controls and offers "Organization Policies" that can enforce security constraints across all buckets in a project or folder hierarchy. Google emphasizes encryption by default, ensuring all data is encrypted at rest and in transit.

Compliance and Durability Controls

All three platforms support compliance with regulations such as GDPR and HIPAA and maintain attestations and authorizations including SOC 1/2/3 and FedRAMP High. To combat ransomware, providers offer immutability features:

- AWS S3 Object Lock: Prevents an object from being deleted or overwritten for a fixed period or indefinitely.

- Azure Immutable Storage: Offers WORM (Write Once, Read Many) capabilities for blob data.

- GCP Bucket Lock: Enforces a retention policy on all objects within a bucket so that they cannot be deleted or replaced until they reach the configured retention period.
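As an illustration of how such a control is expressed, the sketch below builds a default-retention document in the shape S3 Object Lock expects. The helper name and the 30-day value are hypothetical; in practice the dictionary would be passed as the `ObjectLockConfiguration` argument of boto3's `put_object_lock_configuration` call, which is not invoked here.

```python
from typing import Any, Dict

VALID_MODES = {"GOVERNANCE", "COMPLIANCE"}


def object_lock_configuration(mode: str, days: int) -> Dict[str, Any]:
    """Build a default-retention Object Lock configuration document."""
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {sorted(VALID_MODES)}")
    if days <= 0:
        raise ValueError("days must be a positive integer")
    return {
        "ObjectLockEnabled": "Enabled",
        "Rule": {"DefaultRetention": {"Mode": mode, "Days": days}},
    }


if __name__ == "__main__":
    # Hypothetical policy: new objects are immutable for 30 days.
    print(object_lock_configuration("COMPLIANCE", 30))
```

Compliance mode prevents any user from shortening or removing the retention period, while governance mode allows specially authorized principals to override it, so the choice of mode is the main design decision when using this feature for ransomware protection.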

Projections for 2026 and Beyond: Agentic Scale

As the industry moves through 2026, object storage is being redefined by the requirements of "agentic scale" workloads. This concept refers to the shift from human-driven applications to autonomous AI agents that generate massive, bursty I/O requests and require real-time data access at an unprecedented scale.

Technological Convergence

The distinction between object, file, and block storage is blurring. Azure's integration of Managed Lustre with Blob Storage and AWS's S3 Tables indicate a trend toward "unified storage platforms" that simplify data management across diverse workloads. Performance is no longer a trade-off for scalability; the use of Data Processing Units (DPUs) and specialized hardware accelerators like AWS Nitro and Azure Boost is providing step-function gains in storage throughput and energy efficiency.

For developers, the Azure Storage SDK version 2026-02-06 now supports Dynamic User Delegation SAS and Files Provisioned V2 Guardrails, reflecting the ongoing maturation of data protection mechanisms for cloud-native apps. AWS's Graviton5 processors deliver up to 25% higher performance than the previous generation, improving the efficiency of the underlying compute layer that manages storage requests.

Market Dynamics

AWS continues to hold the largest market share (approx. 29-30%), followed by Microsoft Azure (20%) and Google Cloud (13%); these figures are typically reported for each provider's cloud infrastructure business as a whole rather than for object storage specifically. While AWS is the benchmark for scale and breadth, Azure is capturing market share in the enterprise and regulated sectors through its hybrid offerings and Microsoft 365 integration. Google Cloud is increasingly attractive for AI-native startups and organizations focused on data analytics and high-speed networking.

The emergence of "sovereign-by-design" infrastructure, particularly the AWS European Sovereign Cloud and Dedicated Local Zones, suggests a future where cloud regions are not just geographic locations but distinct legal and operational entities. This will lead to a more fragmented but highly secure global cloud infrastructure, where enterprises can choose providers based on the specific jurisdictional protections they offer.

Strategic Conclusions

The strategic evaluation of object storage in 2026 requires a multidimensional analysis beyond basic capacity and cost.

AI/ML optimization is the primary driver for high-performance storage. Organizations prioritizing AI training should look toward AWS S3 Express One Zone and GCP's Hierarchical Namespace buckets for the performance gains necessary to keep GPUs utilized. For large-scale inferencing and RAG patterns, Azure Premium Blob Storage and the "Storage for AI" portal experience offer superior integration and low-latency characteristics.

Sovereignty and compliance are no longer optional. For highly regulated industries in the EU, the AWS European Sovereign Cloud provides the most robust isolation currently available, ensuring that even metadata remains within local jurisdiction. Organizations requiring deep hybrid integration will find Azure's partnership with Oracle and its hybrid management tools to be a significant advantage for unifying transactional and analytical data.

Financial efficiency requires a proactive FinOps strategy. Teams should leverage automated tiering features such as AWS S3 Intelligent-Tiering to manage storage costs without manual intervention, and should treat GCP's automatic sustained-use discounts as a compute-side saving rather than a storage one. The "zero egress" policies announced in 2024 should be viewed as exit incentives rather than a reduction in active multicloud operational costs.

ESG reporting is most granular on Google Cloud for those seeking SKU-level AI energy insights, while AWS and Azure offer highly accurate tools for Scope 3 emissions that are critical for corporate sustainability disclosures.

The choice between AWS, Azure, and Google Cloud Storage remains dependent on the organization's existing cloud commitment, the specific performance requirements of its AI roadmap, and the regulatory landscape of the regions in which it operates. By 2026, the object storage layer has truly become the "filesystem for AI," and its successful implementation is a prerequisite for enterprise digital transformation.